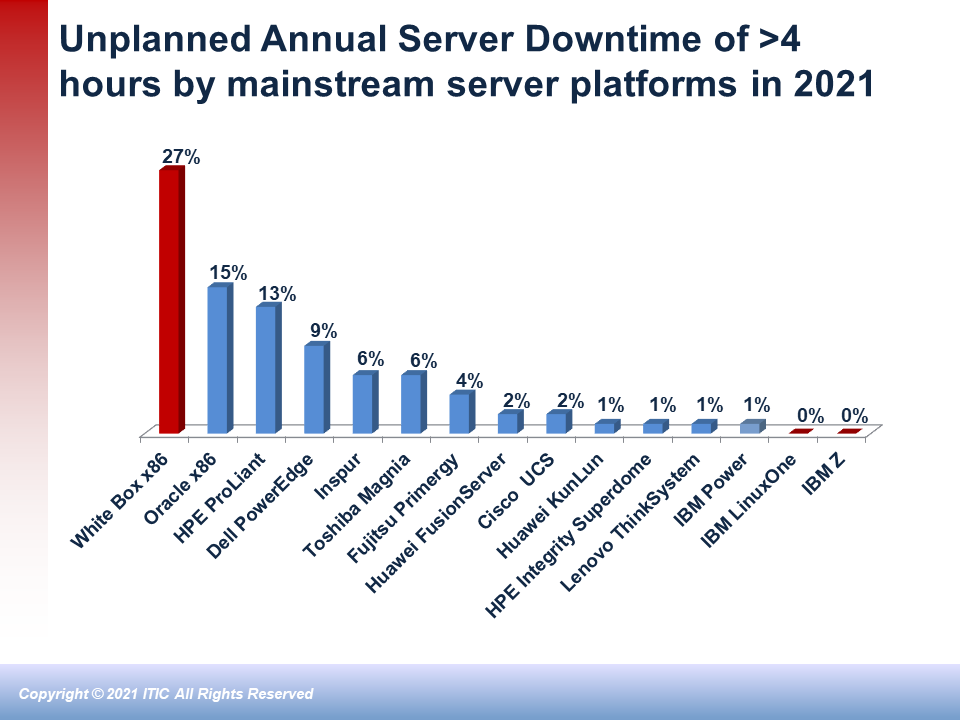

IBM, Lenovo, Huawei, Hewlett-Packard Enterprise and Cisco hardware are the most secure and reliable servers. These platforms experienced the fewest successful hacks and recorded the least amount of unplanned downtime due to data breaches among mainstream servers in the last year.

Those are the results of the latest ITIC Global Server Hardware, Server OS Reliability and Security survey which polled over 1,000 businesses worldwide across 28 different vertical market sectors from October 2020 through March 2021.

The most recent ITIC survey statistics indicate that reliability and security are closely intertwined and even symbiotic. The top five most reliable server platforms (the IBM Z, IBM Power Systems, Lenovo ThinkSystem, Huawei KunLun and Fusion Servers, HPE Integrity Superdome and Cisco UCS, in that order) also boast the strongest security.

ITIC’s most recent Global Security poll similarly found that IBM, Lenovo, Huawei and HPE mission critical servers experienced the lowest percentages of downtime due to successful security hacks and data breaches.

The IBM Z mainframe outpaced all other server distributions and is in a class of its own as it achieved its most robust security and reliability ratings to date in the latest ITIC study.

Only a minuscule 0.3% of IBM Z high-end servers suffered a successful data breach. Among other mainstream hardware platforms, just four percent (4%) of IBM Power Systems and Lenovo ThinkSystem users reported their systems were successfully hacked, while five percent (5%) of Huawei KunLun and HPE Integrity Superdome server customers reported a security breach between March 2020 and April 2021.

Just over one in ten (11%) of Cisco UCS servers were successfully hacked. Cisco’s hardware performed extremely well, particularly considering that many UCS servers are deployed in remote locations and at the network edge, which are frequently the first line of defense and bear the brunt of attacks. Unbranded white box servers were the most vulnerable to security penetrations: 44% of ITIC survey respondents reported they were successfully hacked.

Overall, ITIC’s survey findings indicate that there is a clear and widening gap in server hardware security and reliability between the top-performing platforms and the least secure offerings. The global pandemic sparked a wave of COVID-19 related data breaches, ransomware, phishing, Business Email Compromise (BEC), CEO fraud and attacks that continue unabated.

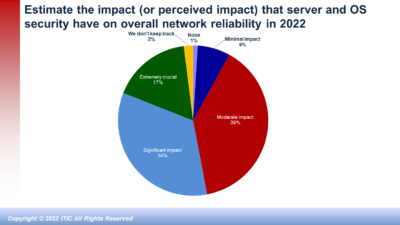

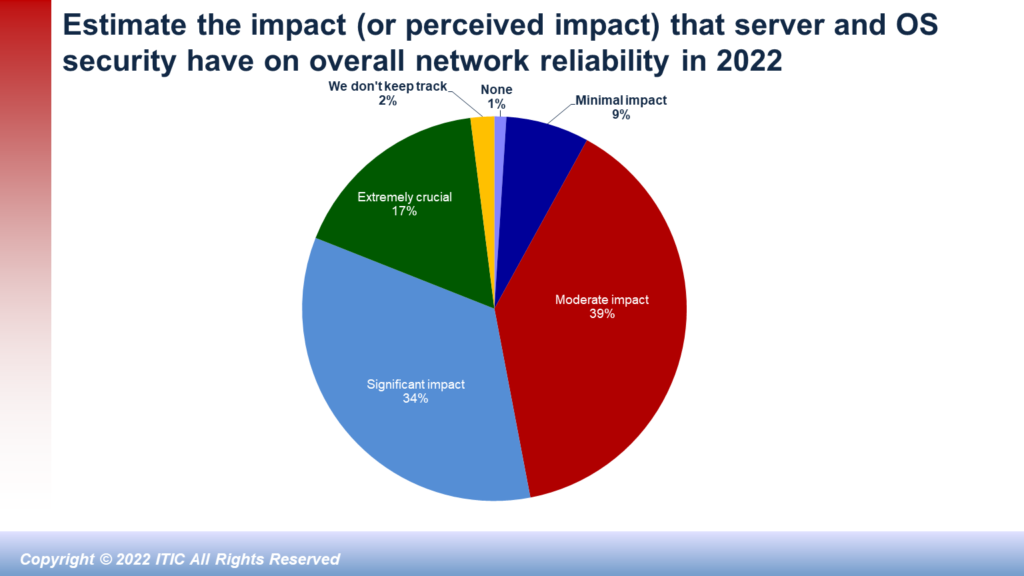

Security and reliability issues are closely intertwined: a successful data breach immediately compromises server, application and network uptime and availability. Security will likely persist as the chief threat that causes expensive unplanned downtime and outages.

Survey Highlights

Notably, despite a 31% spike in security hacks and data breaches during the COVID-19 pandemic over the last 16 months, IBM, Lenovo, Huawei, HPE and Cisco maintained their top positions as the most reliable and secure server platforms.

Additionally, the top five server distributions achieved the best security ratings among all mainstream server hardware platforms in every security category in ITIC’s latest poll, including:

- The fewest attempted security hacks/data breaches

- The fewest successful security hacks/data breaches

- The fastest Mean Time to Detection (MTTD) from the onset of the attack until the company isolated and shut it down

The strong security results posted by IBM, Lenovo, Huawei, HPE and Cisco (in that order) are especially noteworthy since they occurred during the height of the COVID-19 global pandemic. Some 31% of ITIC survey respondents said their servers, operating systems and critical business applications suffered successful penetrations by myriad security hacks and data breaches since the outset of COVID-19 in early 2020. This is an increase of 12 percentage points, up from the 19% in ITIC’s 2020 Global Server Hardware, Server OS Reliability survey.
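The distinction between a percentage-point change and a relative change is easy to blur in figures like these. As a minimal sketch, using only the 19% and 31% shares quoted above (the function name is illustrative and not part of ITIC's methodology), the two measures can be computed as follows:

```python
# Shares of respondents reporting successful breaches, as quoted in the text.
# The helper name and structure are illustrative assumptions, not ITIC's method.
def change_in_share(previous_pct: float, current_pct: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change in percent)."""
    point_change = current_pct - previous_pct
    relative_change = point_change / previous_pct * 100
    return point_change, relative_change


points, relative = change_in_share(previous_pct=19.0, current_pct=31.0)
print(f"{points:.0f} percentage points, {relative:.1f}% relative increase")
```

In other words, the share of affected respondents rose by 12 percentage points, which corresponds to a relative increase of roughly 63% over the prior survey's share.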

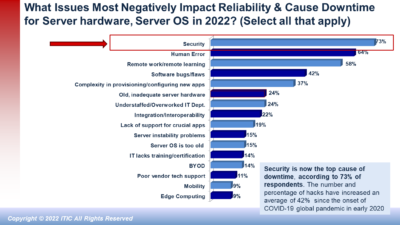

Security is a core component of every organization’s network. Robust security is even more crucial in the COVID-19 era which ushered in a variety of new scams. Some 69% of organizations cited security and data breaches as the greatest threats to the reliability of server, application, data center, network edge and cloud ecosystems. The hacks themselves are more targeted, prevalent, pervasive and pernicious: They are designed to inflict maximum damage and losses on their enterprise and consumer victims.

Data Breaches are Big Business

Data breaches are big business and a primary business for the burgeoning professional hacking community. A successful hack is expensive on many levels. In 2020, the cost of a data breach averaged $3.86 million, according to the 2020 Cost of a Data Breach Study jointly conducted by IBM and the Ponemon Institute[1]. This represents a 10% increase since 2015.

ITIC’s latest survey data also indicates that the hourly cost of downtime now exceeds $300,000 for 88% of businesses. Overall, 40% of mid-sized and large enterprise survey respondents reported that a single hour of downtime costs their firms over $1 million. A data breach that occurs during peak usage hours and interrupts crucial business operations can cost businesses millions per minute.
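To put the hourly figures in perspective, they can be converted to a per-minute or per-outage estimate. The sketch below assumes cost scales linearly with outage duration, which is a simplifying assumption made here for illustration and not a model ITIC describes; as noted above, outages during peak usage can cost far more. The function name is hypothetical.

```python
# Linear estimate of downtime cost from an hourly figure.
# Assumption: cost accrues evenly per minute; real peak-hour losses can be far higher.
def downtime_cost(hourly_cost_usd: float, outage_minutes: float) -> float:
    """Estimate the cost of an outage lasting `outage_minutes`."""
    return hourly_cost_usd / 60 * outage_minutes


per_minute_300k = downtime_cost(300_000, 1)   # $300,000/hour threshold from the survey
per_minute_1m = downtime_cost(1_000_000, 1)   # $1 million/hour threshold from the survey
quarter_hour_300k = downtime_cost(300_000, 15)

print(f"${per_minute_300k:,.0f} per minute at $300,000/hour")
print(f"${per_minute_1m:,.0f} per minute at $1 million/hour")
print(f"${quarter_hour_300k:,.0f} for a 15-minute outage at $300,000/hour")
```

Even under this conservative linear assumption, a 15-minute outage at the $300,000 hourly threshold costs about $75,000.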

Besides the obvious monetary losses due to lost productivity and disrupted operations, businesses must factor in the number of staff hours and the number of IT and security administrators involved in remediation efforts and the full return to operation. Companies must also determine whether any data or intellectual property (IP) was lost, stolen, damaged, destroyed or changed. Organizations must also add in the cost of any litigation as well as potential civil or criminal fines and penalties associated with security incidents and data breaches. Some costs, like damage to an organization’s reputation, are incalculable and may result in lost business.

Hackers pick and choose their targets with great precision and are quick to take advantage of every opportunity. The COVID-19 pandemic is a prime example. Hackers immediately set their sights on teleworkers and remote learning students taking online and Zoom classes. They zeroed in on so-called “soft targets”: local and state municipalities, small and mid-sized school districts, hospitals, health care clinics, doctors’ offices and branch bank offices that may lack full-time onsite security and IT administrators and may not have installed the latest security.

It’s no surprise that vendors like IBM, Lenovo, Huawei and HPE, which perennially achieve top server reliability ratings, were also among the most secure hardware platforms. These vendors, and more recently Cisco, have made server security (and in Lenovo’s case server, PC and laptop security) a top priority and have invested heavily in bolstering the inherent security of their product offerings over the last several years. So when the COVID-19 pandemic hit, they already had strong, embedded security, and this stood them and their customers in good stead.

The most secure server hardware platforms experienced the fewest successful security breaches. Respondents using the IBM Z running z/OS and RHEL Linux, and the IBM LinuxONE III, all said those platforms had no successful security hacks over the 16 months. They were followed by the IBM Power Systems and Lenovo ThinkSystem servers with one each; Huawei KunLun, which averaged two hacks; the HPE Integrity with three successful penetrations; and Cisco’s UCS servers with seven data breaches. The unbranded white box servers were the most porous, averaging 20 successful data breaches in the past 16 months.

Data breaches are big business, and they are expensive. The average cost of a data breach in 2020 was $3.86 million, according to the 2020 Cost of a Data Breach Study jointly conducted by IBM and the Ponemon Institute[2]. While the report indicates that the average data breach cost declined by a slight 1.5% compared with 2019’s study, the $3.86 million figure still represents a 10% increase since 2015.

A DTEX Systems report found that “only 30% of organizations were prepared to secure a complete shift to remote work.” The DTEX Systems study also found that almost 75% of organizations are concerned about the security risks introduced by users working from home, and 73% of businesses admitted they have partial or no visibility into user activity if their VPN is disabled by remote workers. Another alarming finding is that teleworkers use their work laptops for personal use: 25% of respondents acknowledged this increases the risk of drive-by downloads, and 15% said their firms are more susceptible to phishing attacks.

Conclusions and Recommendations

Security is now the number one issue that undermines the reliability of server hardware, server OS and business critical applications. All organizations should make security a priority and work closely with their vendors to mitigate security risks to an acceptable level.

Every added second and minute of server downtime and application unavailability negatively impacts business operations, employee productivity and revenue.

No server platform, server OS or business application will provide foolproof security. However, IBM, Lenovo, Huawei, HPE and Cisco, which are among the most reliable server platforms, also provide the greatest levels of inherent security. This enables customers to achieve the greatest economies of scale and safeguard their sensitive IP and data assets. That said, security is a 50/50 proposition. While vendors must deliver robust security, corporations are responsible for maintaining the reliability of their server and overarching network infrastructure. ITIC strongly advises businesses to:

- Take inventory of all devices and applications across the ecosystem.

- Conduct security vulnerability testing at least annually and work with third party experts.

- Have a remediation and governance plan in place in the event your firm is successfully hacked.

- Ensure that Security and IT professionals receive adequate training.

- Ensure that end users as well as contract workers and temporary employees receive adequate security awareness training on the latest email and phishing scams and ransomware threats.

- Implement strong security policies and procedures and enforce them.

- Regularly replace, retrofit and refresh server hardware and server operating systems with the necessary patches, updates and security fixes as needed to maintain system health.

- Keep up-to-date on the latest security patches and fixes.

- Ensure that your firm’s hardware and software vendors and cloud vendors meet or exceed the terms of their Service Level Agreements (SLAs) for agreed upon security and reliability levels.

[1] “2020 Cost of a Data Breach Study,” IBM and the Ponemon Institute. URL: https://www.ibm.com/security/data-breach

[2] “2020 Cost of a Data Breach Study,” IBM and the Ponemon Institute. URL: https://www.ibm.com/security/data-breach